Return to computing page for the first course APMA0330

Return to computing page for the second course APMA0340

Return to Mathematica tutorial for the first course APMA0330

Return to Mathematica tutorial for the second course APMA0340

Return to Mathematica tutorial for the fourth course APMA0360

Return to the main page for the first course APMA0330

Return to the main page for the second course APMA0340

Return to Part I of the course APMA0340

Introduction to Linear Algebra with Mathematica

Glossary

Preface

In this section, we consider a way to handle equations containing small parameters, denoted by ε or δ. Perturbation theory is a collection of methods for obtaining approximate solutions to equations with a small parameter. The method is applicable to equations of any kind, algebraic, differential or integral, but often the success, or otherwise, the application depends upon the ingenuity applied to the problem---a judicious change of variable can turn a seemingly intractable problem into one that is more manageable.

The main assumption underlying perturbation theory is that the solution is a well-behaved function of ε, the small parameter. A regular perturbation problem is defined as one whose perturbation series is a power series in ε with a non-vanishing radius of convergence; for these problems the perturbed solution smoothly approaches the solution of the unperturbed equation---that is, the equation with ε = 0---as ε → 0.

A singular perturbation problem is one whose perturbation series either does not take the form of a power series, or if it does the power series has a vanishing radius of convergence: for example, it could be an asymptotic expansion. In these problems the equation normally has a different character when ε = 0 than when ε ≠ 0. Such problems are very common and we shall meet them in several different guises. It is surprising that even in these unpromising circumstances perturbation expansions are still useful.

Under some circumstances, perturbation theory is an invalid approach to take. This happens when the system we wish to describe cannot be described by a small perturbation imposed on some simple system. In quantum thermodynamics, for instance, the interaction of quarks with the gluon field cannot be treated perturbatively at low energies because the coupling constant (the expansion parameter) becomes too large, violating the requirement that corrections must be small.

Perturbation theory was developed by various mathematicians and physicists over time, with roots in 18th and 19th-century astronomical calculations. J.-L. Lagrange and P. Laplace were the first to advance the view that the constants which describe the motion of a planet around the Sun are "perturbed" , as it were, by the motion of other planets and vary as a function of time; hence the name "perturbation theory". Lord Rayleigh and Erwin Schrödinger are credited with developing the most frequently used form, Rayleigh-Schrödinger perturbation theory, in the 20th century, particularly for quantum mechanics. Léon Brillouin and https://en.wikipedia.org/wiki/Eugene_Wigner">Eugene Wigner also contributed with their work on Brillouin-Wigner perturbation theory.

The Perturbation analysis

|ψ⟩ ⟨ψ|

On the eve of twentieth century, the French mathematician Jacques-Salomon Hadamard (1865--1963) introduced the following definition.

- The problem has a solution.

- The solution is unique.

- The solution's behavior changes continuously with the auxiliary conditions.

If the problem is well-posed, then there is a good chance to solve this problem numerically on a computer using a stable algorithm. If it is ill-posed, it needs to be re-formulated for numerical treatment. Typically this involves including additional analysis that may lead to auxiliary assumptions, such as smoothness of solution. This process is known as regularization. Tikhonov regularization is one of the most commonly used for regularization of linear ill-posed problems.

The vague third condition in the definition above could be made more precise by rescaling the problem and introducing a small parameter, which we denot by ε or δ. When we model a physical system, the variables and the value of the coefficients that appear in the model equations depend on the physical units being used. For example the length unit might be micron, centimeter, meter, kilometer, light year (i.e., the distance that light travels in one Earth year) and so on. It is obvious then that the use of different units will impact the value of the parameters and coefficients that appear in the model equation. In order to overcome this issue one attempts to find “characteristic values” for the variables that appear in the equations and combinations of these values so that the equations are expressed in terms of “pure numbers” (independent of the physical units). We illustrate this process by a few examples.

Another way to look on this issue is to realize that α is not a dimensionless number since each of the terms in Equation (2.1) must have the same dimension. Therefore α must have the dimension of (mass/length). Hence, the dimensionless form of this constant is (αL/M). ■

In order to clarify the perturbation method, let us consider the simplest example of a single ordinary differential equation

The initial conditions to be imposed on the successive corresponding terms are convenient to choose homogeneous for all of them except the first one:

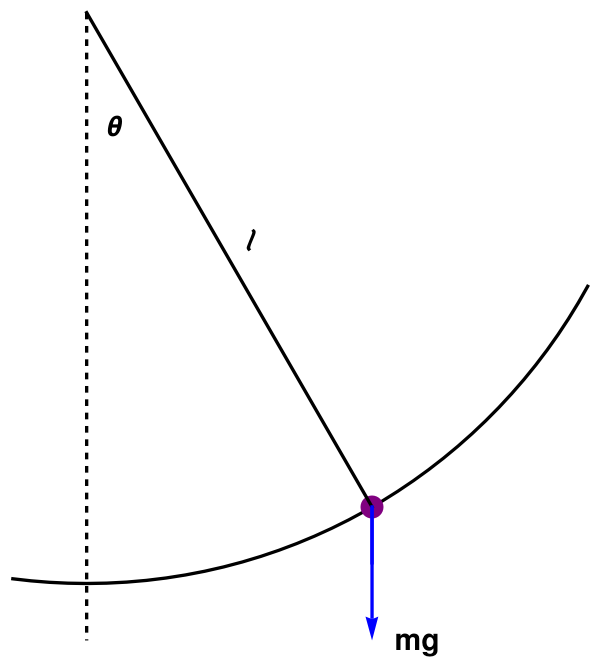

We consider an approximation that lead to derivation of so called simple pendulum model. Let denote by θ(t) the inclination of the rod to the vertical at time t. If s(t) denoted the distance the bob has moved from the vertical in a counterclockwise direction, then θℓ = s. Equating m(d²s/dt²) to the force component that is tangential to the path of the bob has moved, we obtain the following equation of motion: \[ m\ell\,\ddot{\theta} = -mg\,\sin\theta , \] where g is the acceleration due to gravity. The tension along the spring/rod does not enter because it acts normally to the path of the bob.

The equation of motion is convenient to write in the standard (when leading coefficient is 1) form: \[ \ddot{\theta} + \omega_0^2\sin\theta = 0, \qquad \omega_0 = \left( \frac{g}{\ell} \right)^{1/2} . \tag{4.1} \] Its motion is subject to the initial conditions: \[ \theta (0) = a , \qquad \dot{\theta}(0) = \Omega . \tag{4.2} \] Equations (4.2) and (4.2) comprise the initial value problem for simple pendulum motion.

Standard treatment of problem (4.1), (4.2) by perturbation method starts with linear approximation \[ \ddot{\theta} + \omega_0^2 \theta = 0 , \qquad \theta (0) = a, \quad \dot{\theta)(0) = \Omega . \] Its solution is given by

To improve on the approximation, one might write Eq.(4.1) in the form \[ \ddot{\theta} + \omega_0^2 \theta = \omega_0^2 \left( \theta - \sin\theta \right) \] and then obtain a reasonable accurate value of the right-hand side by using the first approximation θ₀ of linear equation (4.3), namely, \[ \omega_0^2 \left( \theta - \sin\theta \right) = \omega_0^2 \left( \theta - \theta + \frac{\theta^3}{3!} - \frac{\theta^5}{5!} + \cdots \right) = \omega_0^2 \frac{\theta^3}{3!} - \cdots , \] is of the order of θ³, while the left-hand side is of the order θ. Thus, the smaller right-hand side can be ignored altogether to obtain an initial approximation θ₀(t). A better approximation should be obtained if substitution θ = θ₀ is made on the right-hand side, etc. Hence, the first attempt at an improved approximation θ₁(t) might be expected to satisfy \[ \ddot{\theta}_1 + \omega_0^2 \theta_1 = \omega_0^2 \left( \theta_0 - \sin\theta_0 \right), \qquad \theta_1 (0) = a , \quad \dot{\theta}_1 (0) = \Omega . \tag{4.5} \] However, an exact evaluation of the right-hand side (4.5) is almost impossible for θ₀. Instead, we replace Eq.(4.5) with the following approximation \[ \ddot{\theta}_1 + \omega_0^2 \theta_1 = \omega_0^2 \, \frac{\theta_0^3}{3!} , \qquad \theta_1 (0) = a , \quad \dot{\theta}_1 (0) = \Omega . \tag{4.6} \] In principle, repetition of the process will provide successively more accurate approximations. Therefore, this method is known as iteration (Latin iterare to do again), or successive approximations. ■

Then the governing equation of motion becomes \[ \ddot{\Theta} + \omega_0^2 \Theta = \omega_0^2 \left( \Theta - \theta^{-1}_m \sin \theta_m \Theta \right) = \omega_0^2 \theta_m^2 \frac{\Theta^3}{6} + \cdots . \] Note that its right-hand side is of order (θm)² because |Θ| ≤ 1 and therefore it is very small compared to unity.

For simplicity, let us assume that the pendulum is released from rest, so Ω = 0. It is physically clear that θm = 𝑎, so our problem becomes \begin{align*} \ddot{\Theta} + \omega_0^2 \Theta &= \omega_0^2 \left( ]Theta - a^{-1} \sin a\Theta \right) \\ &= \omega_0^2 \sum_{n\ge 1} (-1)^{n+1} a^{2n} \frac{\Theta^{2n+1}}{(2n+1)!} , \\ &\quad \Theta (0) = 1, \quad \dot{\Theta}(0) = 0 . \end{align*} We seeks solution of this initial value problem in the following form: \[ \Theta = \Theta (t, a) = \Theta^{(0)} (t) _ a\, \Theta^{(1)} (t) + a^2 \Theta^{(2)} (t) + \cdots \tag{4A.1} \] Upon substitution this series into the differential equation, we obtain a sequence of equations subject to the following initial conditions \begin{align*} \Theta^{(0)} (0) &= 1 , \quad \dot{\Theta}^{(0)} (0) = 0 , \\ \Theta^{(n)} (0) &= 1 , \quad \dot{\Theta}^{(n)} (0) = 0 , \quad \mbox{for} \quad n\ge 1 . \end{align*} In this formulation, the dependence of the solution on amplitude 𝑎 is explicitly demonstrated. Note that if all the higher powers of the amplitude are neglected, we get the usual simple harmonic motion.

To ensure that our method works, one may try to prove convergence of series (4A.1). However, this turns out to be incorrect neither for necessary statement nor foe sufficient one. Even when such series is divergent, it can still be useful as an asymptotic series. On the other hand, convergence does not assure its usefulness. An inordinately large number of terms might have to be summed to obtain reasonable accuracy.

As a matter of fact, it can be shown that series (4A.1) is in general convergent in any finite interval 0 ≤ t ≤ T, provided that 𝑎 is sufficiently small (it follows from analytic dependence of the right-hand side of the differential equation). However, this does not assure usefulness of the solution for many periods, Upon carrying out calculations, one can show that \[ \Theta^{(0)} (t) = \cos\omega_0 t , \qquad \Theta^{(1)} (t) \equiv 0. \] Hence, the next approximation satisfies \[ \ddot{\Theta}^{(2)} + \omega_0^2 \Theta^{(2)} = \frac{\omega_0^2}{6} \,\cos^3 \omega_0 t. \] Using trigonometric identities (or computer algebra system), this equation can be shown to be equivalent to \[ \ddot{\Theta}^{(2)} + \omega_0^2 \Theta^{(2)} = \frac{\omega_0^2}{24} \left( \cos 3 \omega_0 t + 3\,\cos\omega_0 t \right) . \] This is a linear constant coefficient differential equation whose right-hand side contains a "resonant" term cos(ω₀t. Therefore, its solution contains a factor of t: \[ \Theta (t) = \frac{\sin \omega_0 t}{96} \left[ \sin (2\omega_0 t) + 6\,\omega_0 t \right] . \]

However, the term proportional to tsin(ω₀t) holds the center of our interest because it is not uniformly small for all time. Hence, approximation Θ(0) + 𝑎²Θ(2) is an improvement over Θ(0) only for the time interval that Θ(0) itself offers a reasonable approximation. ■

The Poincaré representation of solution Θ(t, 𝑎) is \begin{align*} \Theta &= \Theta^{(0)} (\tau ) + a \, \Theta^{(1)} (\tau ) + a^2 \Theta^{(2)} (\tau ) + \cdots , \tag{4P.1} \\ t &= \tau + a\,t^{(1)} (\tau ) + a^2 t^{(2)} (\tau ) + \cdots , \end{align*} where τ is a parameter. Poincaré's idea was to let solution Θ(0)(τ) take on the simple form (4P.1) in variable τ, but to distort the time scale so that the defects in the solution are removed. We expect to eliminate all secular (seculum means "generation" or "age" in Latin; hence, the word "secular" applies to processes of slow change) terms and to get the correct period with good approximation. The calculations are fairly complicated but can be simplified by noting that series (4P.1) should be in 𝑎².It also turns out that one may take all the correction terms to be a constant multiple of τ, thus, \[ t = \tau \left( 1 + a^2 h_2 \right) , \qquad h2 \mbox{ is a constant}. \] The equation for Θ(2) is then found to be \[ \left( \frac{{\text d}^2}{{\text d}\tau^2} + \omega_0^2 \right) \Theta^{(2)} = 2h_2 \frac{{\text d}^2}{{\text d}\tau^2} \,\Theta^{(0)} + \frac{\omega_0^2}{24} \left( \cos 3\omega_0 \tau + 3\,\cos \omega_0 \tau \right) . \] Using exact solution for Θ(0), the expression above can be reduced to \[ \left( \frac{{\text d}^2}{{\text d}\tau^2} + \omega_0^2 \right) \Theta^{(2)} = \omega_0^2 \left( \frac{1}{8} - 2 h_2 \right) \cos\omega_0\tau + \frac{\omega_0^2}{24}\,\cos 3\omega_0 \tau . \] Although the cosω₀τ term on the right-hand side would lead to an undesirable secular term proportional to τ sinω₀τ, it can be avoided by taking \[ h_2 = \frac{1}{16} . \] The period in &tau becomes 2π/ω₀ to this approximation, but is (2π/ω₀)(1 + 𝑎²h₂) in t.

■The solutions of specific problems is only a prelude to understanding the phenomena. In particular, the lessons learned from attacking a specific problem can often be formulated in terms of general principles and developed into general theory in order to deepen our understanding. However, this approach cannot always be done in a formal manner. The Poincaré theory is a case in point.

The success of the parametric representation introduced by Henri Poincaré has led to its application toother types of ordinary differential equations, for removal of a variety of difficulties of a similar nature. Basically, there is a need to shift the solution surface by a mapping or distortion in the domain of the independent variables. However, it is not fruitful to try to classify all the problems that can be solved by this approach.

- Upon multiplication of the pendulum equation by derivative \( \displaystyle \quad \dot{\theta} , \quad \) show that its first integral is \[ \frac{1}{2} \left( \dot{\theta} \right)^2 = \omega_0^2 \left( \cos\theta - \cos a \right) = 2 \omega_0^2 \left( \sin^2 \frac{a}{2} - \sin^2 \frac{\theta}{2} \right) , \] where 𝑎 is the maximum amplitude. Verify that further transformation \[ \sin\frac{\theta}{2} = \sin\frac{a}{2}\,\sin\psi \] brings the solution into the form \[ t = -\frac{1}{\omega_0} \int_{\pi /2}^{\psi} \left( 1 - k^2 \sin^2 \psi \right)^{-1/2} {\text d}\psi , \qquad \theta (0) = a , \] where \( \displaystyle \quad k^2 = \sin^2 \frac{a}{2} . \)

- Hadamard, Jacques (1902). Sur les problèmes aux dérivées partielles et leur signification physique. Princeton University Bulletin. pp. 49–52.

- Tikhonov, A. N.; Arsenin, V. Y. (1977). Solutions of ill-Posed Problems. New York: Winston. ISBN 0-470-99124-0.

Return to Mathematica page

Return to the main page (APMA0340)

Return to the Part 1 Matrix Algebra

Return to the Part 2 Linear Systems of Ordinary Differential Equations

Return to the Part 3 Non-linear Systems of Ordinary Differential Equations

Return to the Part 4 Numerical Methods

Return to the Part 5 Fourier Series

Return to the Part 6 Partial Differential Equations

Return to the Part 7 Special Functions